Why securing AI agents requires a new approach

AI agents do not behave like traditional software. Securing them means moving beyond access and protocols to govern autonomous systems in real time.

What are AI agents?

AI agents are like digital employees, with roles, access, data, and the ability to make decisions in pursuit of goals in real time. The minimum viable definition of an agent is a large language model equipped with at least one tool. The tool can be anything from an API to an MCP server to a SaaS connector.
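To make the minimum viable definition concrete, here is a small sketch of "a model plus at least one tool". Everything in it is an illustrative assumption: `Tool`, `Agent`, `stub_model`, and `lookup_order` are hypothetical names, and `stub_model` is a plain function standing in for a real large language model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple


@dataclass
class Tool:
    """A callable capability the agent can invoke (an API, a connector, etc.)."""
    name: str
    description: str
    run: Callable[[str], str]


@dataclass
class Agent:
    """An agent in the minimal sense: a decision-making model plus tools."""
    # The model picks a tool and an input for it, given a goal (hypothetical interface).
    model: Callable[[str, Dict[str, Tool]], Tuple[str, str]]
    tools: Dict[str, Tool] = field(default_factory=dict)

    def act(self, goal: str) -> str:
        if not self.tools:
            raise ValueError("an agent needs at least one tool")
        tool_name, tool_input = self.model(goal, self.tools)
        return self.tools[tool_name].run(tool_input)


def stub_model(goal: str, tools: Dict[str, Tool]) -> Tuple[str, str]:
    # Stand-in for an LLM: always chooses the first tool and passes the goal through.
    return next(iter(tools)), goal


def lookup_order(query: str) -> str:
    # Stand-in for a real API call.
    return f"order status for {query!r}: shipped"


agent = Agent(
    model=stub_model,
    tools={"lookup_order": Tool("lookup_order", "Look up an order", lookup_order)},
)
result = agent.act("A-1001")
```

The point of the sketch is the shape, not the logic: once the model's output is allowed to choose and invoke a tool, the system acts on the world rather than only producing text.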

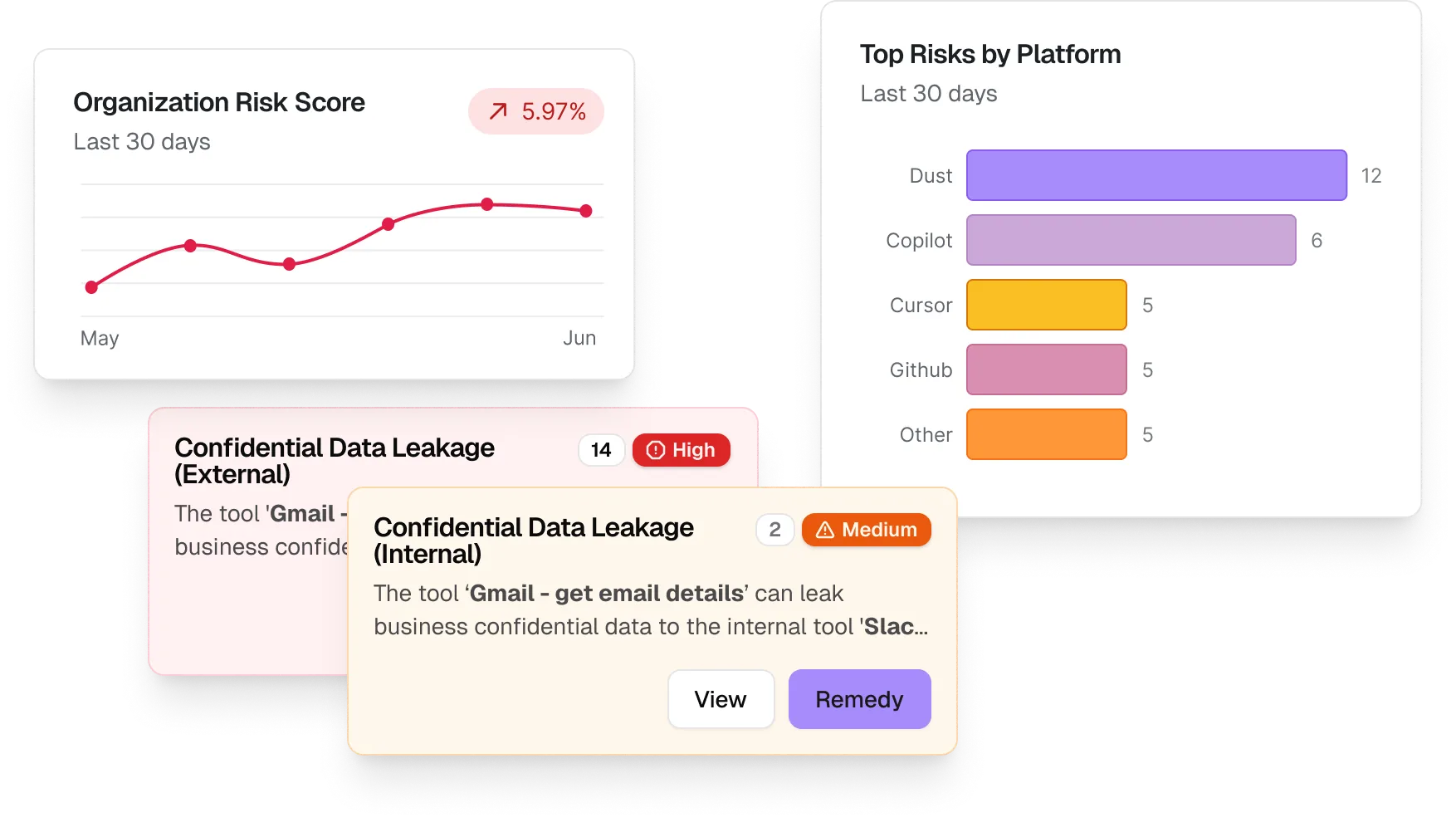

What are agentic risks?

Agents amplify existing risks while also creating new blind spots.

- Identity hijacking through delegated credentials

- Prompt injection into generative AI systems

- Data exfiltration through unsafe tool use or memory corruption

Agents can produce unsafe or incorrect outcomes even when no system is breached and no permissions change. Risk emerges when context, tools, or coordination subtly drift in ways traditional controls cannot detect.

- Inference drift from context corruption, where poisoned memory entries or contaminated retrieved context shape future decisions

- Tool impersonation within workflows, caused by look-alike tools, outdated references, or ambiguous capability descriptions

- Silent failure through partial task completion, where agents report success despite skipped steps or degraded outcomes
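As one illustration of the last risk, silent failure can be pictured as a mismatch between what an agent reports and what it actually did. The sketch below is a simplified assumption, not a description of any product's detection logic: `TaskReport`, the step names, and both helper functions are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TaskReport:
    """What the agent claims, alongside the steps observed in its execution trace."""
    claimed_success: bool
    steps_executed: List[str]


def find_skipped_steps(required_steps: List[str], report: TaskReport) -> List[str]:
    """Return required steps that never appear in the observed trace."""
    executed = set(report.steps_executed)
    return [step for step in required_steps if step not in executed]


def is_silent_failure(required_steps: List[str], report: TaskReport) -> bool:
    """True when the agent reports success although required steps were skipped."""
    return report.claimed_success and bool(find_skipped_steps(required_steps, report))


# Hypothetical workflow: the agent skips validation but still reports success.
required = ["fetch_invoice", "validate_totals", "post_to_ledger"]
report = TaskReport(
    claimed_success=True,
    steps_executed=["fetch_invoice", "post_to_ledger"],
)
skipped = find_skipped_steps(required, report)
flagged = is_silent_failure(required, report)
```

No permission changed and no system was breached in this example, which is why the failure is only visible when the reported outcome is compared against observed behavior.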

Geordie is built to secure agents however they're built and wherever they live.

Agents fail silently.

Agents make decisions continuously, adapt to context, and operate across systems without a fixed perimeter. Risk doesn't appear as a single event; it emerges over time.

Beam is Geordie’s risk mitigation engine that contextually guides agent decisions in real time, keeping actions aligned with enterprise policies.

Core platform capabilities

Continuous visibility across your agentic footprint

Understand what your agents are doing in real time

Proactive risk mitigation with Beam

Geordie's architecture collects and correlates data from three critical vantage points: code, cloud, and the endpoint.

Geordie maps to international and business-specific frameworks with behavioral context and continuous verification:

- EU AI Act

- OWASP Agentic Top Ten

- ISO 42001

- NIST AI RMF

- OECD AI

From gateways to behavioral governance

MCP Gateways | Protocol Layer

- Protocol-layer tool mediation and traffic control

- Visibility ends at the transaction boundary

- Governs access, not agent behavior across workflows

Geordie | Behavioral Layer

- Agentic fingerprinting across code, cloud, and the endpoint

- End-to-end behavioral telemetry across workflows

- Beam for real-time contextual governance preventing unsafe actions
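The difference between the two layers can be sketched with a toy example. This is a simplified assumption for illustration only and does not represent how any MCP gateway or Geordie's Beam is implemented: the tool names, the allowlist, and the `UNSAFE_SEQUENCES` policy are all hypothetical.

```python
from typing import List, Set, Tuple

# Protocol-layer view: each tool call is judged on its own.
ALLOWED_TOOLS: Set[str] = {"read_customer_records", "summarize", "send_email"}

# Workflow-level view (hypothetical policy): ordered pairs of calls that are
# unsafe when the second follows the first within the same workflow.
UNSAFE_SEQUENCES: List[Tuple[str, str]] = [("read_customer_records", "send_email")]


def gateway_allows(tool_call: str) -> bool:
    """Per-transaction check: is this single tool permitted?"""
    return tool_call in ALLOWED_TOOLS


def workflow_violations(trace: List[str]) -> List[Tuple[str, str]]:
    """Sequence check: flag unsafe orderings across the whole trace."""
    violations = []
    for first, second in UNSAFE_SEQUENCES:
        if first in trace and second in trace[trace.index(first) + 1:]:
            violations.append((first, second))
    return violations


trace = ["read_customer_records", "summarize", "send_email"]
per_call_ok = all(gateway_allows(call) for call in trace)
violations = workflow_violations(trace)
```

Every call in the trace passes the per-transaction check, yet the sequence as a whole matches an unsafe pattern. That gap is what "visibility ends at the transaction boundary" refers to.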
